Most advice on this topic is lazy.

People act like the problem is AI itself. It isn’t. The core problem is generic, unverifiable writing. A bad resume is still bad if a human wrote it by hand. A strong resume is still strong if AI helped tighten the wording.

That matters because hiring managers are asking the wrong question. Not “Was AI involved?” Ask, “Does this resume prove real competence, or does it just mimic professional-sounding language?” That is how to tell if a resume is ai-generated in any useful sense.

The market has already shifted. Recruiters are dealing with polished, standardized applications that sound impressive but collapse under scrutiny. AI generated resume bullets sound fake, if not done right. If you want a broader recruiter-side view of that shift, Parakeet-AI's blog is one of the better places to track how screening and verification are changing.

The practical issue is simple. AI makes it easier to produce resumes that look finished before they become truthful. That is why so many candidates get ignored. The resume reads clean, but says nothing. If that sounds familiar, this piece on why your resume is being ignored and how to fix it gets at the same root problem.

The Problem with AI Resumes Isnt the AI

The wrong hiring filter creates the wrong hiring outcome.

If you treat AI use as the offense, you will miss the core problem. The offense is a resume that sounds finished before it says anything true. I do not care whether a candidate used ChatGPT, Grammarly, or a sharp human editor. I care whether the document shows specific proof, clear ownership, and a career story that holds up when you question it.

That standard matters because AI creates an authenticity paradox. Good candidates use it to tighten language, fix weak phrasing, and turn rough experience into readable copy. Weak candidates use it to hide thin experience behind polished language. Same tool. Very different signal.

Generic is the enemy

AI did not invent generic resumes. It made them cheaper and faster to produce.

Before AI, candidates copied job descriptions, bought stale templates, or paid resume writers who stuffed every bullet with vague corporate language. Now the same weak content can be generated in minutes and polished enough to pass a quick skim. That is why detection alone is a dead-end strategy. The better question is whether the resume contains details a real person would know and defend.

A credible resume names the work. It names the context. It gives you enough texture to test the claim.

If a bullet says someone “improved operations,” I want to know what operation, what broke, what changed, and who felt the result. If the answer is still mush after a follow-up question, the candidate either outsourced the story or never owned the work.

The authenticity paradox

Ethical AI assistance improves clarity. Deceptive AI use replaces evidence.

That is the line.

A candidate who uses AI to clean up sentence structure is doing basic editing. A candidate who uses AI to invent metrics, exaggerate ownership, or add strategic language they cannot explain is creating risk for you. The fix is not banning AI. The fix is requiring claims that can survive verification.

Do not ask whether AI touched the resume. Ask whether the candidate can explain every bullet without hiding behind polished wording.

That approach is more useful for candidates too. Strong applicants should stop trying to sound impressive and start making their experience easier to verify. If they need help, they should use AI the same way they would use an editor. Tighten the language, then ground every line in real work. That is also the standard behind writing resume bullet points that show ownership and outcomes.

What authentic resumes still do

Real resumes leave traces of actual work. Lazy AI strips those traces out.

Look for:

-

Specific tools and systems: not “data platforms,” but Snowflake, Looker, SAP, HubSpot

-

Real operating conditions: a migration deadline, a small team, a messy handoff, an audit, a legacy codebase

-

Believable ownership: owned a module, handled escalations, trained new hires, ran reporting for one region

-

Concrete outcomes: what improved, who benefited, and what tradeoff was involved

A fake-sounding resume rounds everything into the same safe language. “Drove strategic initiatives.” “Collaborated cross-functionally.” “Used data to inform decisions.”

That is not proof. It is cover.

And that is the point hiring teams keep missing. The issue is not AI on the page. The issue is whether the page contains a story with enough detail, limits, and accountability to trust the person behind it.

Spotting the Obvious Red Flags of Lazy AI

The fastest way to spot lazy AI is not detection software. It is reading for missing judgment.

Weak resumes sound polished before they sound true. They use high-status language to cover low-information writing, and they flatten real work into generic claims that could belong to anyone.

The language sounds polished and vacant

Lazy AI does not fail because it writes badly. It fails because it writes safely.

You see the same padded phrasing again and again. “Cross-functional collaboration.” “Strategic initiatives.” “Operational efficiency.” “Results-driven professional.” The words sound expensive. The candidate’s actual contribution disappears.

Treat these as warning signs:

-

Buzzword piles: “strategic,” “proactive,” “dynamic,” “forward-thinking,” “results-oriented”

-

Weak verbs hiding ownership: “supported,” “assisted,” “helped drive,” “contributed to”

-

Missing concrete nouns: no tools, no systems, no customers, no product names, no constraints

-

Uniform sentence pattern: every bullet has the same cadence, polish, and level of abstraction

A real candidate usually writes unevenly. One bullet is sharp. Another is clunky. One detail is too specific. That inconsistency is often a human signal. Lazy AI smooths everything into the same corporate mush.

Before and after examples

Bad AI-style bullet:

- Vague: Collaborated across functions to drive strategic initiatives and optimize operational efficiency.

Better bullet:

- Specific: Worked with product and support to cut ticket backlog by rebuilding the onboarding flow in React and updating help docs.

Bad AI-style bullet:

- Inflated: Spearheaded data-driven decision-making to enhance stakeholder outcomes across multiple functions.

Better bullet:

- Owned: Built a Looker dashboard for the sales team so managers could spot stalled deals without waiting for weekly reports.

Bad AI-style bullet:

- Generic: Improved platform performance through scalable engineering best practices.

Better bullet:

- Verifiable: Reduced API latency by rewriting slow database queries and adding caching for high-traffic endpoints.

That is the authenticity paradox in practice. AI can help a candidate tighten wording. It cannot replace lived detail without exposing the shortcut. The problem is not assistance. The problem is borrowed language with no evidence behind it.

The resume sounds promoted past reality

This shows up constantly in tech hiring.

A junior engineer claims to have “owned platform strategy.” A mid-level product manager says they “drove enterprise transformation.” A support analyst “orchestrated cross-functional alignment.” Those phrases are not impressive. They are suspicious when the title, timeline, and company context do not support them.

Match the verbs to the level of the role. Early-career candidates usually implemented, debugged, documented, shipped, tested, handled escalations, or maintained a specific area. They did not suddenly become the strategic center of the business.

If the language outruns the career history, assume the candidate used AI to inflate, not clarify.

For candidates, the fix is simple. Use AI as an editor, not a ghostwriter. Start with real work, then sharpen it. Good resume bullet points that show ownership and outcomes sound stronger because they are easier to verify.

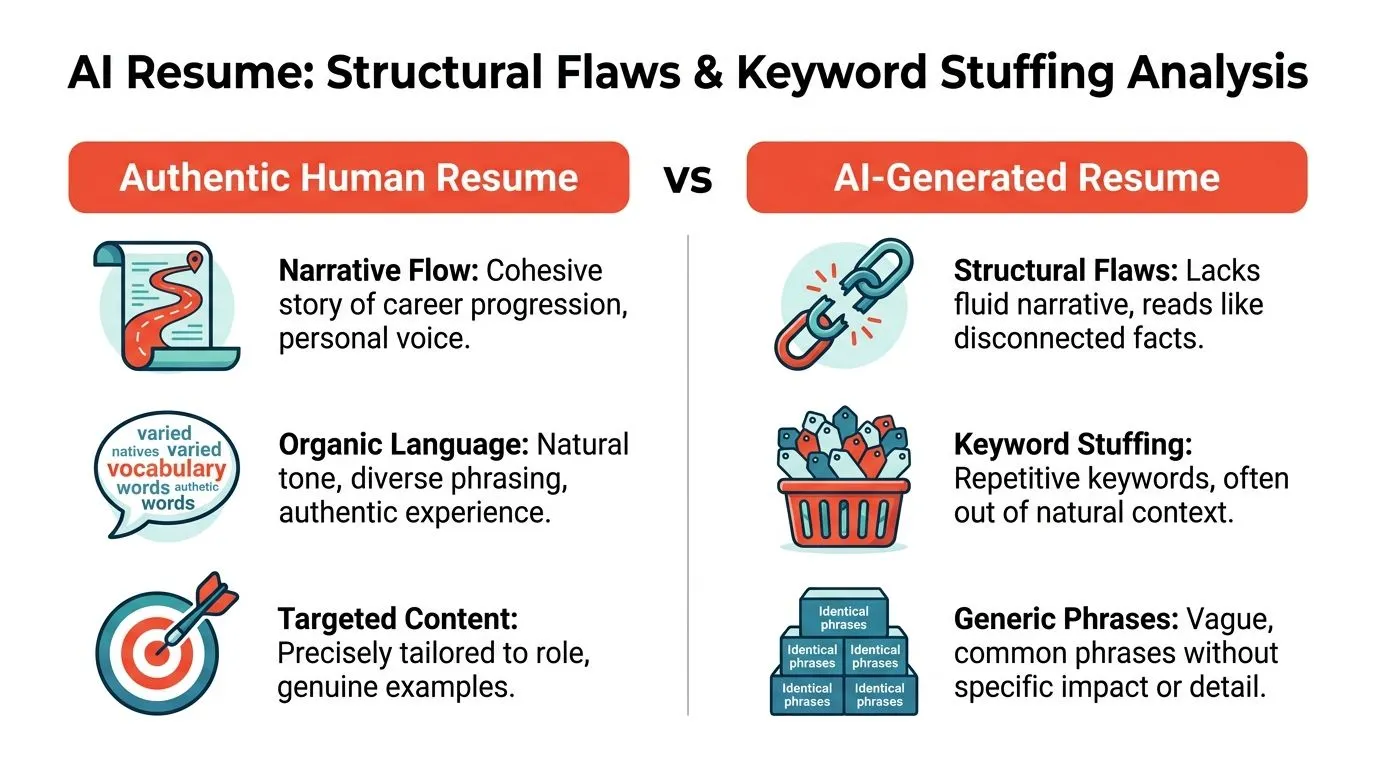

Analyzing Structural Flaws and Keyword Stuffing

The obvious red flags are easy. The harder cases look polished.

That is where structure matters.

Human resumes have flow

A strong resume feels like one person lived one career.

The story moves. Role A explains Role B. The candidate’s choices make sense. Skills appear where they were earned, not dumped into a giant keyword pile.

AI-heavy resumes break that flow. They read like disconnected facts assembled to satisfy a scanner.

You will see:

| Resume trait | Authentic signal | Suspicious signal |

|---|---|---|

| Career progression | Responsibilities grow logically | Every role sounds equally strategic |

| Skills usage | Tools appear in context | Skills repeated in every section |

| Achievements | Distinct by company and role | Same tone and structure throughout |

| Relevance | Fitted to the job | Mirrors the job description too closely |

Check the job description match

This is one of the most useful tests.

A practical rule is to compare the resume against the job description. If over 30% of the achievement bullets match the job description’s phrasing exactly, that is a strong signal of heavy AI assistance or templating, according to Willo’s guide to detecting AI-generated resumes. That same source warns that AI detectors can falsely flag up to 20% of human-written resumes in jargon-heavy fields like tech.

That is why keyword matching alone is not enough. But it is still a good suspicion trigger.

What keyword stuffing looks like in tech resumes

Not all ATS optimization is cheating. Good candidates should reflect the language of the role.

The problem starts when the resume becomes a mirror.

Examples:

-

Natural tailoring: “Used Python and SQL to automate weekly reporting for finance.”

-

Stuffed tailoring: “Python, SQL, reporting automation, data analysis, business intelligence, stakeholder communication, dashboard optimization.”

Or this:

-

Natural: “Built CI/CD workflows in GitHub Actions for a Node.js service.”

-

Stuffed: “CI/CD, DevOps, GitHub Actions, deployment automation, software delivery, release management, engineering excellence.”

One sounds like work. The other sounds like search engine bait.

ATS-friendly is good. ATS-obsessed is obvious.

Look for hidden games

If a resume scores well against the posting, inspect the file.

Plain-text conversion exposes a lot. Copy the content into Notepad or another plain-text editor. Hidden junk appears there first. White-on-white keywords, prompt fragments, weird spacing, and metadata tricks are not creativity. They are cheating.

The candidate may still be qualified. But once the resume starts trying to game the system instead of informing the reader, your skepticism should go up fast.

The Human Verification Workflow You Need

Resume detection is useful. Resume verification is what protects your hiring process.

A candidate can beat a scanner. They usually cannot fake depth for long.

AI-generated resumes achieve job description match scores 25 to 40% higher than human-written ones, but 60 to 70% of those candidates get caught in inconsistencies when asked to explain their metrics or decision-making in detail, based on Breezy’s write-up on AI resume cheating.

That tracks with real hiring experience. The resume gets them in the room. The conversation breaks the illusion.

Use a two-step interview test

First, pick one claim that looks impressive.

Then make the candidate unpack it.

Do not ask, “Tell me about this project?” That is too easy. Anyone can recite a polished summary.

Ask questions that force ownership:

-

What tradeoff did you make?

Real contributors remember what they could not do, what they postponed, and what they argued about. -

How did you know it worked?

If they cite a metric, ask how it was measured, where it came from, and what changed after launch. -

What broke the first time?

People who did the work remember friction. AI-generated bullets skip friction because friction sounds messy. -

Who disagreed with you?

Real projects involve tension. If every answer sounds smooth, the candidate may be reciting fiction. -

What would you do differently now?

Reflection is hard to fake.

Watch for answer quality, not performance style

Some candidates are concise. Some ramble. That alone means nothing.

What you want is specific recall.

Good answers include:

-

Real artifacts: dashboards, incidents, tickets, sprint tradeoffs, customer complaints

-

Decision logic: why this path, not that one

-

Boundaries: what they owned versus what the team owned

-

Plain speech: less theater, more memory

Bad answers collapse into abstractions. “We aligned stakeholders.” “We used best practices.” “We optimized the process.”

That is not an answer. That is residue from the resume.

Here is a useful sanity check. If you are validating outreach-heavy or sales-adjacent backgrounds, basic identity checks matter too. Something as simple as email address verification can help confirm whether the contact footprint around a candidate or company is even real before you waste time on polished nonsense.

A lot of hiring teams try to solve this with tools. I would rather solve it with better questions. The interview is still the best detector.

For candidates, this is also why rewritten resumes work best when they are built from conversation, not blind prompting. The best resume rewrite services do not just polish text. They extract proof. That is the standard hiring managers should expect, and it is also why services built around narrative interviews tend to produce more defensible resumes than simple generators. A useful benchmark is this overview of what to expect from the best resume rewrite service.

A quick explainer on interview-based screening sits below.

The best anti-AI workflow is not a detector. It is a hiring manager who can ask one level deeper than the resume.

How to Prove Your Resume Isnt from a Robot

Candidates do not need to avoid AI. They need to control it.

The underlying problem is not assistance. It is outsourcing judgment. If AI helped you tighten wording, fix structure, or cut repetition, fine. If it invented seniority, inflated impact, or pasted in claims you cannot defend, expect that to surface fast in screening.

A recent video on AI and resume transparency explains that recruiters now ask candidates about AI use directly, which means you should be ready to answer the question plainly: what did the tool help you do, and what parts are entirely yours? Watch the video on AI use and resume transparency.

So, how to make resume bullet points sound human not AI?

Use AI to edit evidence, not manufacture it

Start with raw facts from your actual work:

-

I migrated our analytics stack from Universal Analytics to GA4

-

I worked with one data engineer and two marketers

-

We had inconsistent event naming

-

I wrote the tracking plan and QA process

That input gives AI something useful to work with. It can tighten the language, improve flow, and remove clutter.

Now compare that with prompts that invite fiction:

-

Make me sound more strategic

-

Rewrite my background for a senior role

-

Add stronger business impact

Those prompts are how candidates end up with polished nonsense. The wording improves. The truth gets weaker.

Make every bullet interview-proof

A credible resume survives contact with questions. Use this check before you apply:

-

Can I explain how I did this without memorizing a script?

-

Are these metrics mine, or am I borrowing team results?

-

Would my former manager recognize this as an accurate description?

-

Does this sound like my language, or like a chatbot trying to impress someone?

If a bullet fails any of those tests, fix it.

That is the authenticity paradox. Smart candidates can use AI and still look more credible than candidates who avoid it, because they use it to clarify real work instead of covering weak evidence with polished language.

StoryCV helps people clear that bar by turning messy real experience into sharp, defensible resume writing. It works more like an interview than a template, which is the right approach if you want a resume that sounds clear without sounding fake. See how it works at StoryCV.